| Variable | Type | Default | Description |
|---|---|---|---|
| ClusterEngine | string | | Compute cluster engine (`sge` or `slurm`). |
| ClusterQueue | string | | Queue to which jobs are submitted. |
| ClusterUser | string | | Username under which jobs are submitted. |
| ClusterMaxWallTime | number | | Maximum allowed clock (wall) time, in minutes, for the analysis to run. |
| ClusterMemory | number | | Amount of memory, in GB, requested for a running job. |
| ClusterNumberCores | number | 1 | Number of CPU cores requested for a running job. |
| ClusterNumberConcurrentAnalyses | number | 1 | Number of analyses allowed to run at the same time. This limit is managed by NiDB and is separate from the grid engine queue size. |
| ClusterSubmitDelay | number | | Delay, in hours after the study datetime, before jobs are submitted to the cluster. This allows time for behavioral data to be uploaded. |
| ClusterSubmitHost | string | | Hostname from which jobs are submitted. |
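To make the units concrete (wall time in minutes, memory in GB), the sketch below translates the resource-related cluster fields of a pipeline object into Slurm `sbatch` options. This is a hypothetical illustration, not NiDB's submission code: the function name `slurm_options`, the partition name `short`, and the script name `job.sh` are assumptions. It uses only the standard `sbatch` options `--cpus-per-task`, `--partition`, `--time`, and `--mem`, and it leaves out `ClusterUser` and `ClusterSubmitHost`.

```python
import math
from typing import Any, Dict, List


def slurm_options(p: Dict[str, Any]) -> List[str]:
    """Translate the Cluster* resource fields of a pipeline object into sbatch options.

    Hypothetical mapping for illustration only; NiDB's own submission logic may differ.
    ClusterNumberConcurrentAnalyses is deliberately not used, because that limit is
    enforced by NiDB rather than by the scheduler.
    """
    if p.get("ClusterEngine") != "slurm":
        raise ValueError("this sketch only handles ClusterEngine == 'slurm'")

    # ClusterNumberCores defaults to 1, per the table.
    opts = [f"--cpus-per-task={p.get('ClusterNumberCores', 1)}"]
    if p.get("ClusterQueue"):
        opts.append(f"--partition={p['ClusterQueue']}")
    if p.get("ClusterMaxWallTime") is not None:
        # Wall time is stored in minutes; sbatch --time accepts a plain minute count.
        opts.append(f"--time={p['ClusterMaxWallTime']}")
    if p.get("ClusterMemory") is not None:
        # Memory is stored in GB; convert to whole megabytes, rounding up.
        opts.append(f"--mem={math.ceil(p['ClusterMemory'] * 1024)}M")
    return opts


example = {
    "ClusterEngine": "slurm",
    "ClusterQueue": "short",   # hypothetical partition name
    "ClusterMaxWallTime": 90,  # minutes
    "ClusterMemory": 8,        # GB
    "ClusterNumberCores": 4,
}
print(" ".join(["sbatch", *slurm_options(example), "job.sh"]))
```

Converting memory to megabytes and rounding up means a fractional value such as 1.5 GB becomes `1536M` and is never truncated.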

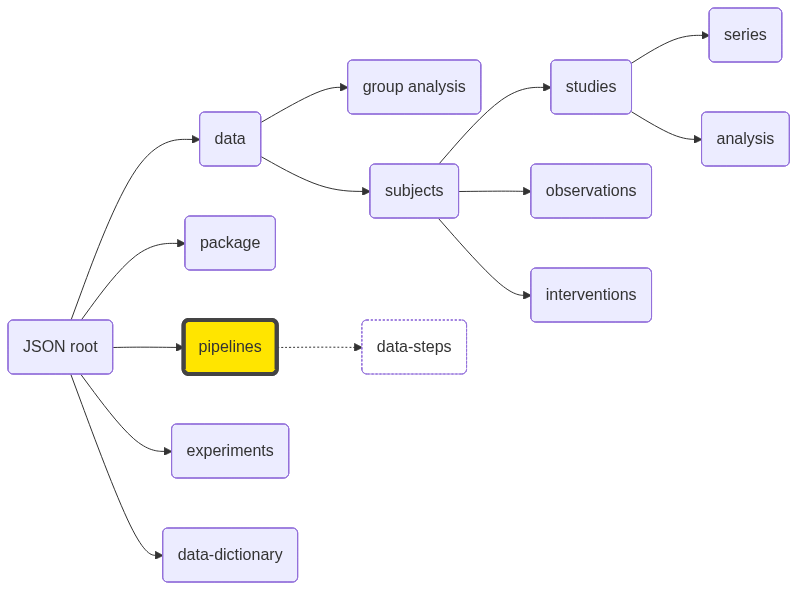

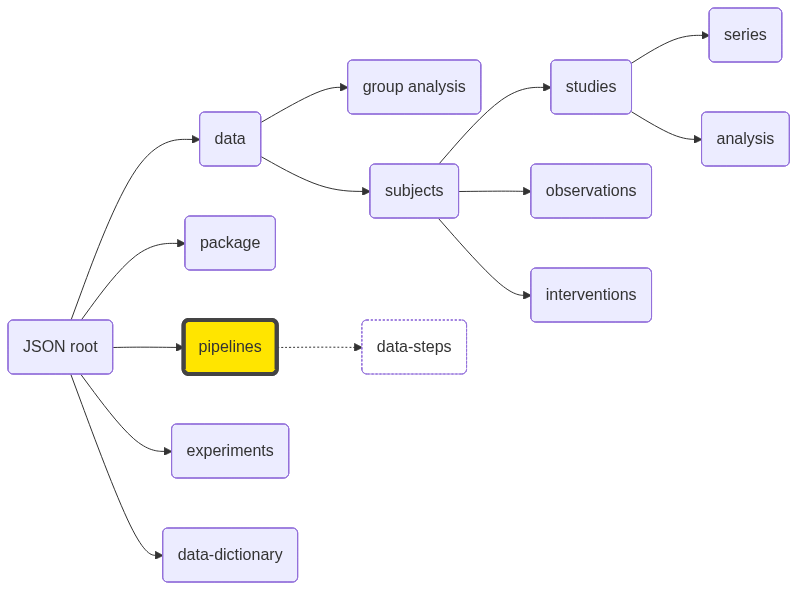

| Variable | Type | Default | Description |
|---|---|---|---|
| PipelineAnalysisLevel | number | 🔴 | Subject-level analysis (1) or group-level analysis (2). |
| PipelineCompleteFiles | JSON array | | JSON array of files expected when the analysis is complete, with paths relative to the analysis root. |
| PipelineCreateDate | datetime | 🔴 | Date the pipeline was created. |
| PipelineDescription | string | | Longer pipeline description. |
| PipelineDirectory | string | | Directory where the analyses for this pipeline will be stored. Leave blank to use the default location. |
| PipelineDirectoryStructure | string | | |
| PipelineName | string | 🔴 🔵 | Pipeline name. |
| PipelineNotes | string | | Extended notes about the pipeline. |
| PipelinePrimaryScript | string | 🔴 | See details of pipeline scripts. |
| PipelineSecondaryScript | string | | See details of pipeline scripts. |
| PipelineResultScript | string | | Executable script run when the analysis completes, to find results and insert them back into NiDB. |
| SetupDataCopyMethod | string | | How the data is copied to the analysis directory: `cp`, `softlink`, or `hardlink`. |
| SetupDependencyDirectory | string | | Where the dependency pipeline is placed relative to the analysis root: `root` or `subdir`. |
| SearchDependencyLevel | string | | |
| SearchDependencyLinkType | string | | |
| SearchGroup | string | | ID or name of a group on which this pipeline will run. |
| SearchGroupType | string | | Either `subject` or `study`. |
| SearchParentPipelines | string | | Comma-separated list of parent pipelines. |
| SetupTempDirectory | string | | Path to a temporary directory on the compute node, if one is used. |
| SetupUseProfile | bool | | `true` if using the profile option, `false` otherwise. |
| SetupUseTempDirectory | bool | | `true` if using a temporary directory, `false` otherwise. |
| Version | number | 1 | Version of the pipeline. |
| DataStepCount | number | 🟡 | Number of data steps. |
| VirtualPath | string | 🟡 | Path of this pipeline within the squirrel package. |
| data-steps | JSON array | | See data specifications. |
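The sketch below shows how a reader or writer might sanity-check a single pipeline object against this table. It makes two assumptions that the table itself does not state: that 🔴 marks a required field, and that `DataStepCount` should equal the number of entries in `data-steps`. The helper names `validate_pipeline` and `parent_pipelines`, and every value in the example object, are hypothetical.

```python
import json
from typing import Any, Dict, List

# Assumption: the 🔴 marker in the table denotes a required field.
REQUIRED = ["PipelineName", "PipelineAnalysisLevel",
            "PipelineCreateDate", "PipelinePrimaryScript"]

# Allowed values, taken from the field descriptions in the table.
# Tuples are used so that unhashable values (e.g. a list) compare safely.
ALLOWED = {
    "ClusterEngine": ("sge", "slurm"),
    "PipelineAnalysisLevel": (1, 2),
    "SetupDataCopyMethod": ("cp", "softlink", "hardlink"),
    "SetupDependencyDirectory": ("root", "subdir"),
    "SearchGroupType": ("subject", "study"),
}


def parent_pipelines(p: Dict[str, Any]) -> List[str]:
    """Split the comma-separated SearchParentPipelines string into names."""
    raw = p.get("SearchParentPipelines", "")
    return [name.strip() for name in raw.split(",") if name.strip()]


def validate_pipeline(p: Dict[str, Any]) -> List[str]:
    """Return a list of problems found in one pipeline object (empty if none)."""
    problems = [f"missing required field: {k}" for k in REQUIRED if k not in p]

    for key, allowed in ALLOWED.items():
        if key in p and p[key] not in allowed:
            problems.append(f"{key}={p[key]!r} is not one of {list(allowed)}")

    # PipelineCompleteFiles holds paths relative to the analysis root.
    for path in p.get("PipelineCompleteFiles", []):
        if not isinstance(path, str) or path.startswith("/"):
            problems.append(f"PipelineCompleteFiles entry is not a relative path: {path!r}")

    # Assumption: DataStepCount should match the number of entries in data-steps.
    steps = p.get("data-steps")
    if steps is not None and "DataStepCount" in p and p["DataStepCount"] != len(steps):
        problems.append("DataStepCount does not match the length of data-steps")

    return problems


example = json.loads("""
{
  "PipelineName": "example-pipeline",
  "PipelineAnalysisLevel": 1,
  "PipelineCreateDate": "2024-01-15 10:30:00",
  "PipelinePrimaryScript": "./run_analysis.sh",
  "PipelineCompleteFiles": ["output/done.txt"],
  "SetupDataCopyMethod": "softlink",
  "SearchParentPipelines": "preproc, qc",
  "DataStepCount": 1,
  "data-steps": [{}]
}
""")
print(validate_pipeline(example))   # no problems expected for this object
print(parent_pipelines(example))
```

The validator collects every problem instead of stopping at the first one, so a single pass reports all of the missing or malformed fields.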